Surveys are a fundamental tool in humanitarian operations. They inform decisions, shape response strategies, and provide the data needed to assess needs, allocate resources, and measure impact. Yet, despite their importance, there is a recurring pattern in how surveys are designed and implemented – one that often underutilizes the expertise of Information Management (IM) professionals.

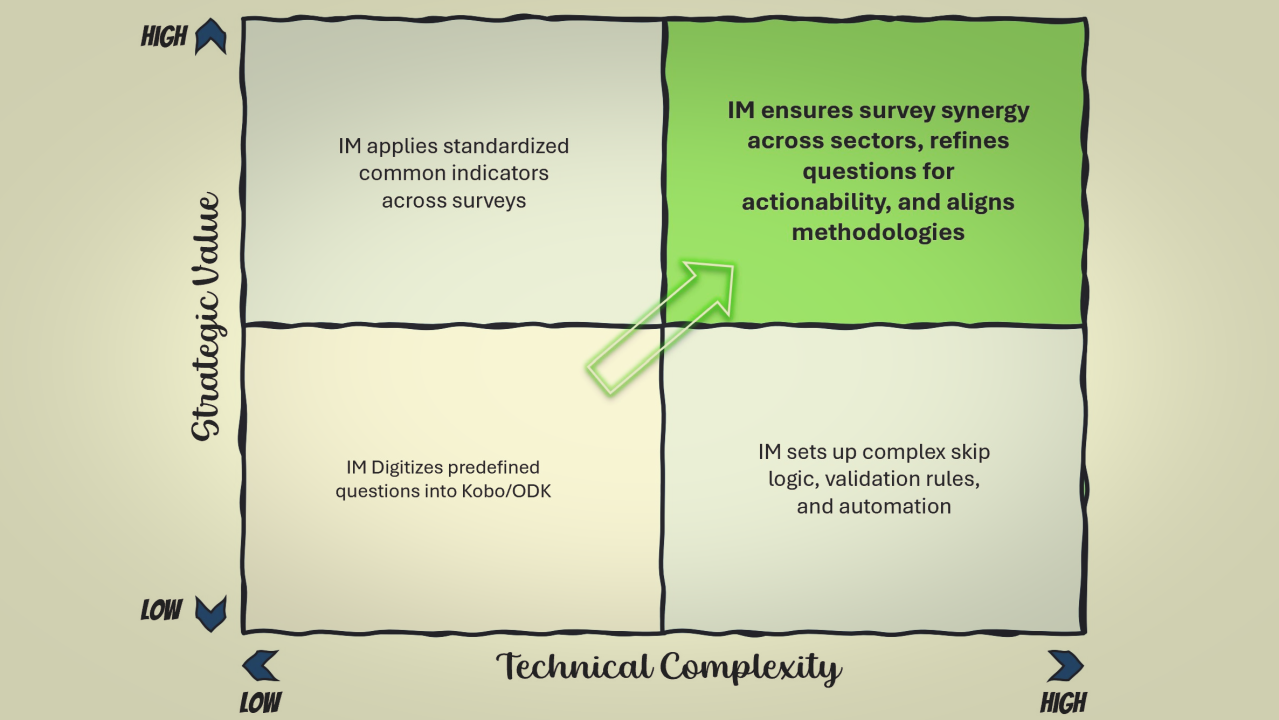

In my experience, the role of IM in surveys at many humanitarian organizations is largely technical: designing and coding forms in Kobo, ODK, or Ona based on predefined questions. Too often, I also see IM professionals take the easy path and stay within their comfort zone. The process is straightforward: managers and coordinators define the survey content, and IM specialists transform it into a digital format. Data is then collected, processed, and analyzed based on these initial inputs.

This approach is common, but it raises a fundamental question: Are we making the most of IM expertise? If the primary function of IM in survey work is simply to digitize forms, then we must acknowledge that modern platforms have made this task increasingly accessible. Many non-IM specialists can now create surveys using user-friendly interfaces with drag-and-drop form builders. If the IM role remains limited to this, then it risks becoming merely an administrative function rather than a strategic one.

Moving Beyond Execution: The Strategic Role of IM in Surveys

To truly leverage IM expertise, we need to rethink its role in the survey process. Information Management is not just about translating questions into digital formats; it is about ensuring that the data collected is standardized, meaningful, and actionable. This shift in approach begins with three key areas: standardization, synergy, and questioning the questions.

The Power of Standardization

One of the biggest challenges in humanitarian data collection is inconsistency. Different surveys within the same organization often use different terminologies, response options, and data structures, making it difficult to merge datasets or conduct meaningful cross-sector analysis. Without standardization, data remains fragmented, requiring significant effort to clean and harmonize before it becomes usable.

A well-structured approach to standardization addresses this issue from the outset. For example, when designing surveys at different levels – household, individual, or community – there should be a clear set of core variables that remain consistent across assessments. If each survey defines its own version of key indicators, comparability becomes nearly impossible.

The same principle applies to administrative classifications. A single humanitarian operation may conduct multiple surveys over time, and if each one uses a different method for recording locations – sometimes using official administrative codes, sometimes free text – aggregating the data later becomes a painstaking process. By implementing a standardized approach to geographic and categorical data, IM professionals ensure that datasets can be easily integrated, compared, and reused, making future analysis far more efficient.
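As a rough illustration of what a standardized approach to location data can look like, the Python sketch below maps free-text location entries to a single administrative code and sets aside anything it cannot match for manual review. The place names, the codes, and the `admin2_code` field are invented for this example; a real operation would use its own official code list.

```python
import unicodedata
from typing import Optional

# Hypothetical lookup table: names and codes are invented for illustration.
ADMIN2_CODES = {
    "north district": "XX0101",
    "south district": "XX0102",
}


def normalize(name: str) -> str:
    """Lowercase the name, strip accents, and collapse repeated whitespace."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return " ".join(no_accents.lower().split())


def to_admin_code(free_text: str) -> Optional[str]:
    """Return the code for a free-text location, or None if there is no match."""
    return ADMIN2_CODES.get(normalize(free_text))


def standardize(records):
    """Split records into those with a known code and those needing review."""
    matched, unmatched = [], []
    for record in records:
        code = to_admin_code(record["location"])
        if code is None:
            unmatched.append(record)
        else:
            matched.append({**record, "admin2_code": code})
    return matched, unmatched


records = [
    {"id": 1, "location": "  North   DISTRICT "},
    {"id": 2, "location": "Sóuth District"},
    {"id": 3, "location": "Somewhere else"},
]
matched, unmatched = standardize(records)
```

The cheaper fix is still to prevent the problem at design time by offering locations as a coded choice list rather than a free-text field. A cleanup step like this is only needed for data that has already been collected inconsistently.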

Creating Synergy in Data Collection

In most humanitarian responses, multiple data collection activities take place simultaneously. Needs assessments, post-distribution monitoring, sectoral surveys, and partner-led evaluations often overlap, yet they are frequently conducted in silos. Each unit or agency designs its own survey based on its specific needs, without necessarily considering what other teams are doing.

This is where IM professionals have a unique advantage. Because they work across different teams and sectors, they have a bird’s-eye view of the overall data landscape. They see what has already been collected, what is currently being gathered, and what is planned for the near future. This knowledge allows them to identify opportunities for collaboration and integration – reducing duplication, increasing efficiency, and ensuring that different datasets complement each other rather than existing in isolation.

At first glance, a Protection team conducting an assessment may have little in common with a Shelter team gathering data. But ultimately, both units are trying to reach and support the same communities. Instead of running two separate exercises, IM professionals can facilitate discussions to align efforts, ensuring that assessments are designed in a way that maximizes the use of resources while minimizing survey fatigue for affected populations. Sometimes, a simple two-hour coordination meeting can lead to significant time and cost savings, as well as richer, more comprehensive data.

Questioning the Questions

Perhaps the most powerful contribution IM professionals can make to the survey process is ensuring that the right questions are being asked in the first place. Designing a survey is not just about listing the information we want to collect – it’s about ensuring that every question serves a clear purpose and leads to actionable insights.

Many surveys are developed under tight deadlines, often using pre-existing templates or past assessments as a starting point. While this can be efficient, it can also result in questions that are outdated, irrelevant, or not well thought out. IM specialists, with their deep exposure to operational data, are in a strong position to challenge and refine these questions before they are finalized.

A good starting point is to ask: Is this question actionable? If a survey collects data on a particular issue, will the results lead to a concrete, feasible plan to address it? If not, the question may not be necessary. Data collection should not be an academic exercise – it should directly contribute to decision-making and programmatic adjustments.

Another key consideration is whether the response options are well-structured. If a multiple-choice question is not designed to be mutually exclusive and collectively exhaustive (MECE), it can lead to ambiguous responses that complicate analysis. Poorly structured response categories often force respondents into inaccurate choices, undermining the reliability of the data.
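For numeric response options such as age brackets, the MECE property can be checked mechanically before a form goes to the field. The sketch below is a minimal example, assuming brackets are given as inclusive integer ranges; the function name `check_mece` and the sample brackets are made up for illustration.

```python
def check_mece(brackets, lowest, highest):
    """Report gaps and overlaps in a list of (label, low, high) brackets.

    Bounds are inclusive integers. An empty result means the brackets are
    mutually exclusive and cover every value from lowest to highest.
    """
    problems = []
    expected = lowest  # next value that still needs to be covered
    for label, low, high in sorted(brackets, key=lambda b: b[1]):
        if low > expected:
            problems.append(f"gap: {expected}-{low - 1} not covered")
        elif low < expected:
            problems.append(f"overlap: '{label}' starts at {low}, already covered")
        expected = max(expected, high + 1)
    if expected <= highest:
        problems.append(f"gap: {expected}-{highest} not covered")
    return problems


good = [("0-17", 0, 17), ("18-59", 18, 59), ("60+", 60, 120)]
bad = [("0-18", 0, 18), ("18-59", 18, 59), ("61+", 61, 120)]

good_problems = check_mece(good, lowest=0, highest=120)
bad_problems = check_mece(bad, lowest=0, highest=120)
```

With these inputs, `good_problems` is empty, while `bad_problems` flags that age 18 falls into two brackets and that age 60 falls into none. Categorical options cannot be checked this way and still need human review, for example to confirm that an "Other" or "Don't know" option exists where it is needed.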

Finally, sometimes the most fundamental data points are overlooked. While designing an assessment, I once asked a Protection coordinator why sex and age data were missing from the questionnaire. She immediately recognized the oversight and later admitted that these fields should have been included from the start. Such small but critical interventions can significantly improve the quality of the data collected.

Shifting the Mindset: From Technical Support to Data Leadership

The role of IM in humanitarian surveys should not be limited to digitizing pre-written questionnaires. IM professionals bring a wealth of expertise in data structuring, integration, and analysis – expertise that is too valuable to be confined to form-building.

By focusing on standardization, coordination, and the refinement of survey content, IM can move from being seen as an execution function to being recognized as a critical enabler of high-quality, decision-ready data.

This shift requires both advocacy and action. IM professionals must actively engage in survey discussions from the earliest stages, ensuring that data collection is designed with usability, comparability, and strategic relevance in mind. It also requires a willingness to challenge assumptions, ask difficult questions, and push for better methodologies – not just in how data is collected, but in how it is ultimately used.

At its core, this is not just about improving surveys. It’s about ensuring that humanitarian decisions are based on reliable, well-structured, and meaningful data – data that has been collected with intentionality and expertise, rather than just convenience.

This is where IM professionals can and should add the most value.